MechanoBeat: Monitoring Interactions with Everyday Objects using 3D Printed Harmonic Oscillators and Ultra-Wideband Radar

Knowing how and when people interact with their surroundings is crucial for constructing dynamic and intelligent environments. Despite the importance of this problem, a simple and attainable solution is still lacking. Current solutions often require powered sensors on the monitored objects or on the users themselves. Many such systems rely on batteries, which are costly and time-consuming to replace. Previous approaches therefore carry significant drawbacks and constraints, not only in their use and operation but also in their cost.

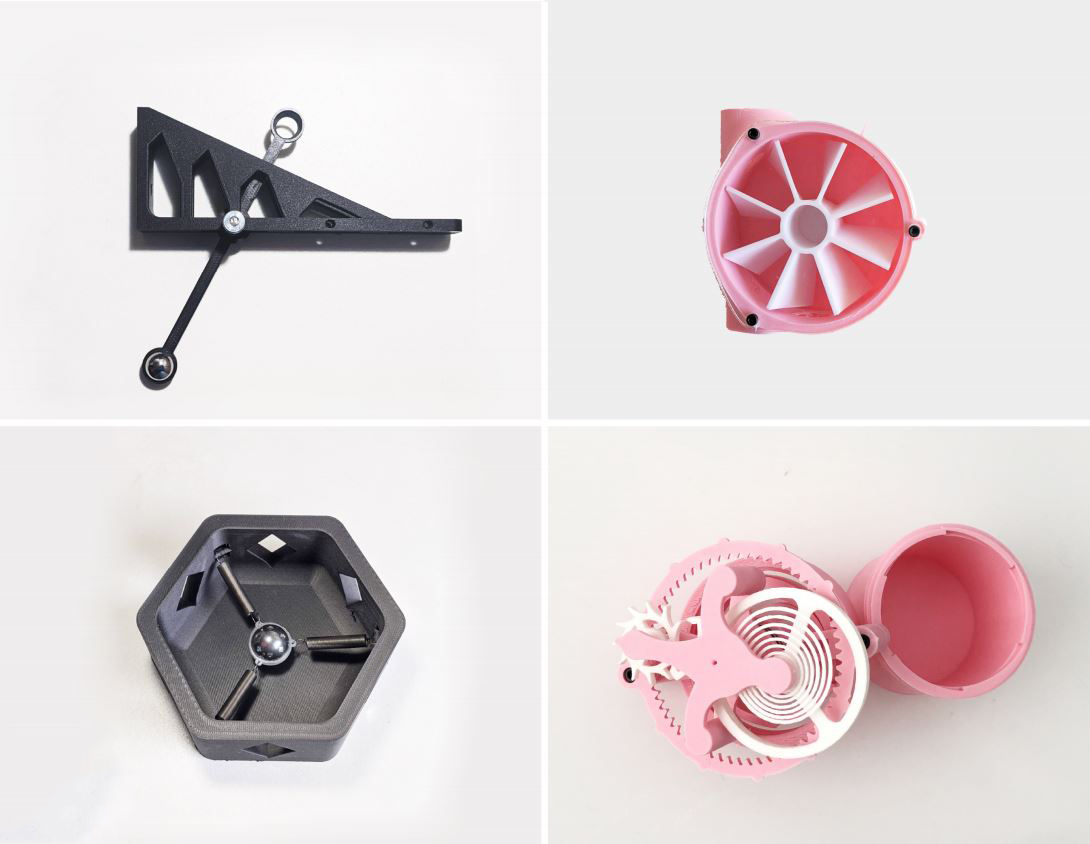

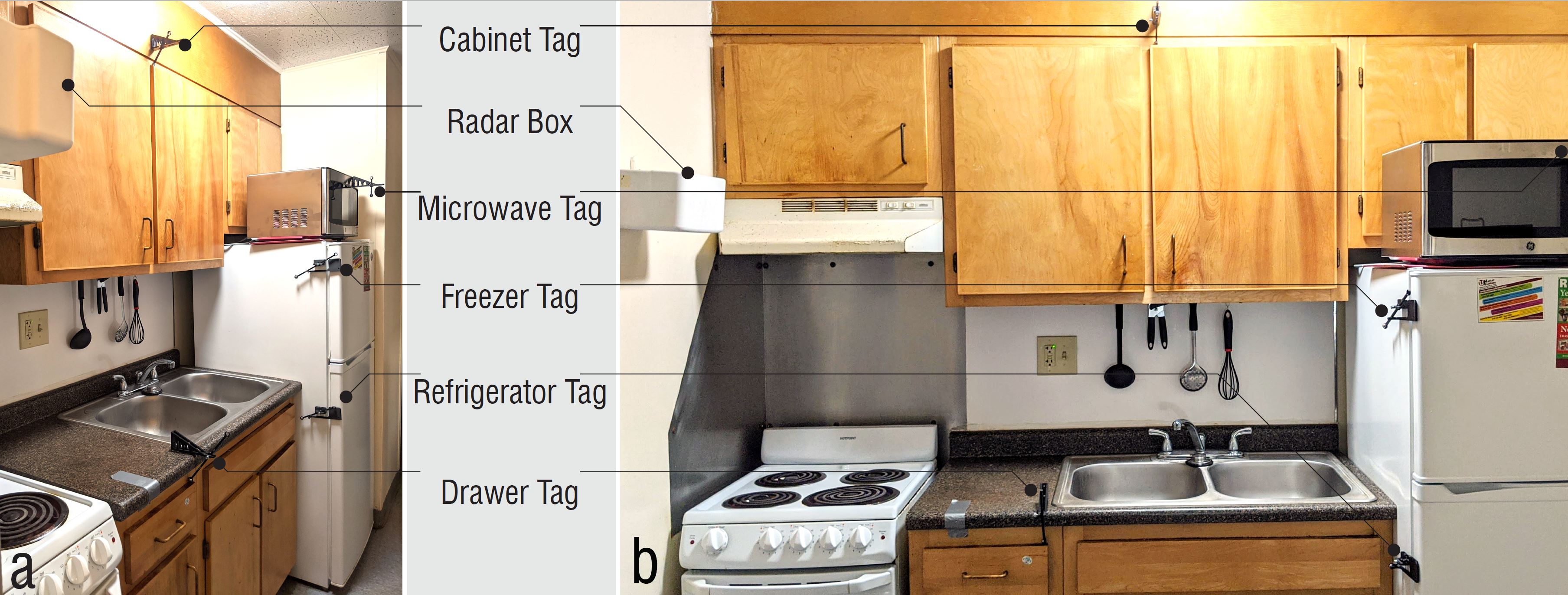

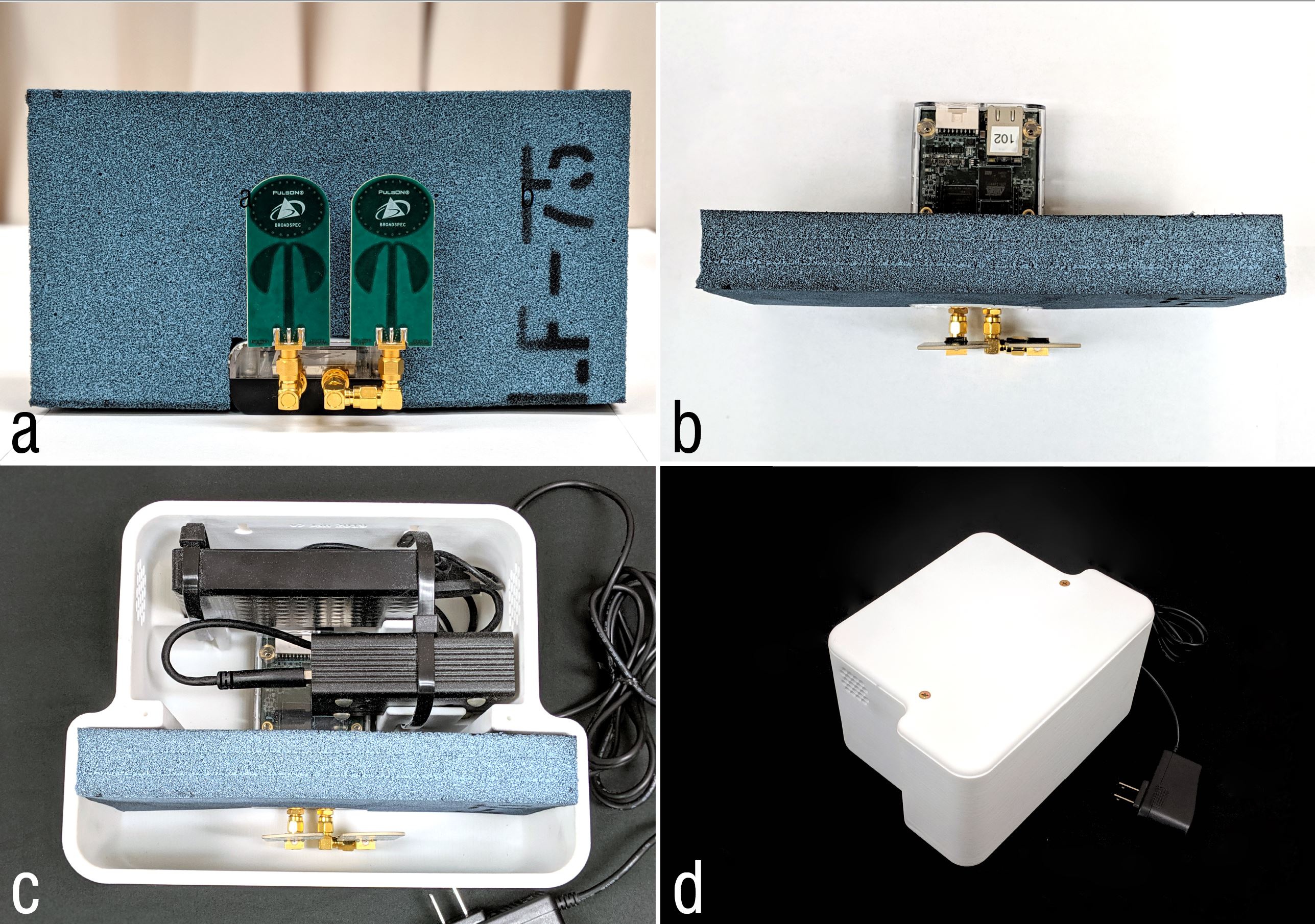

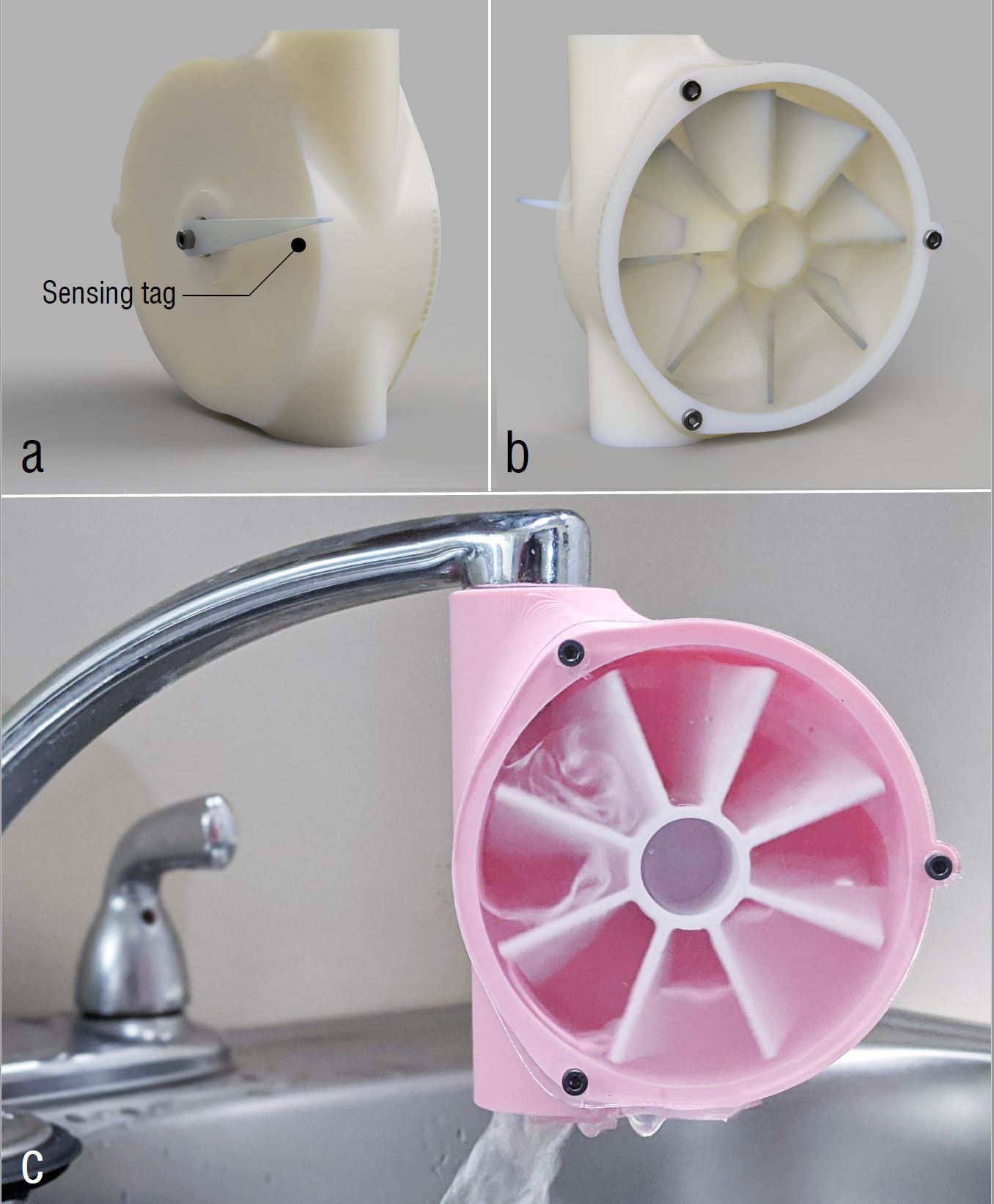

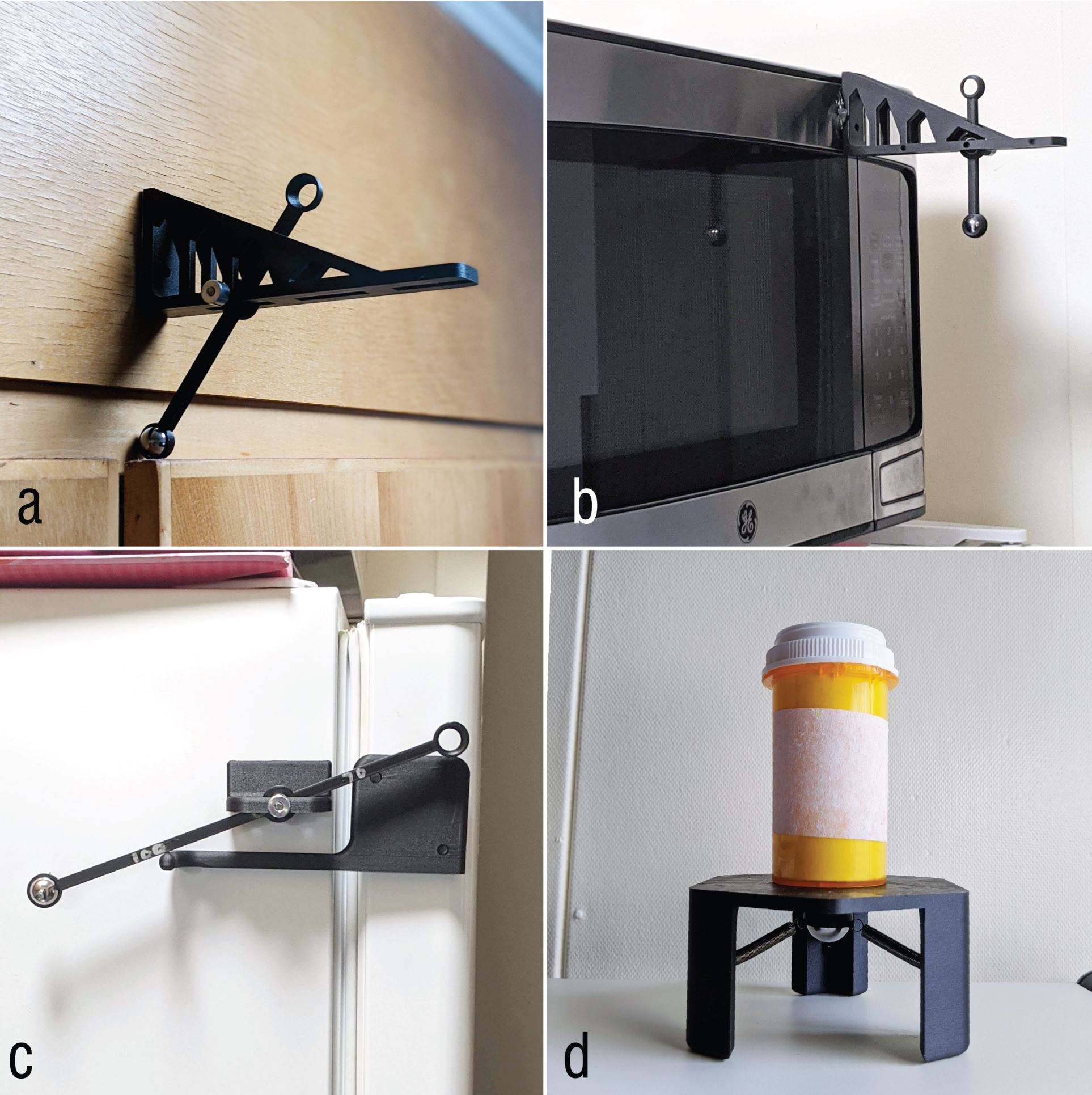

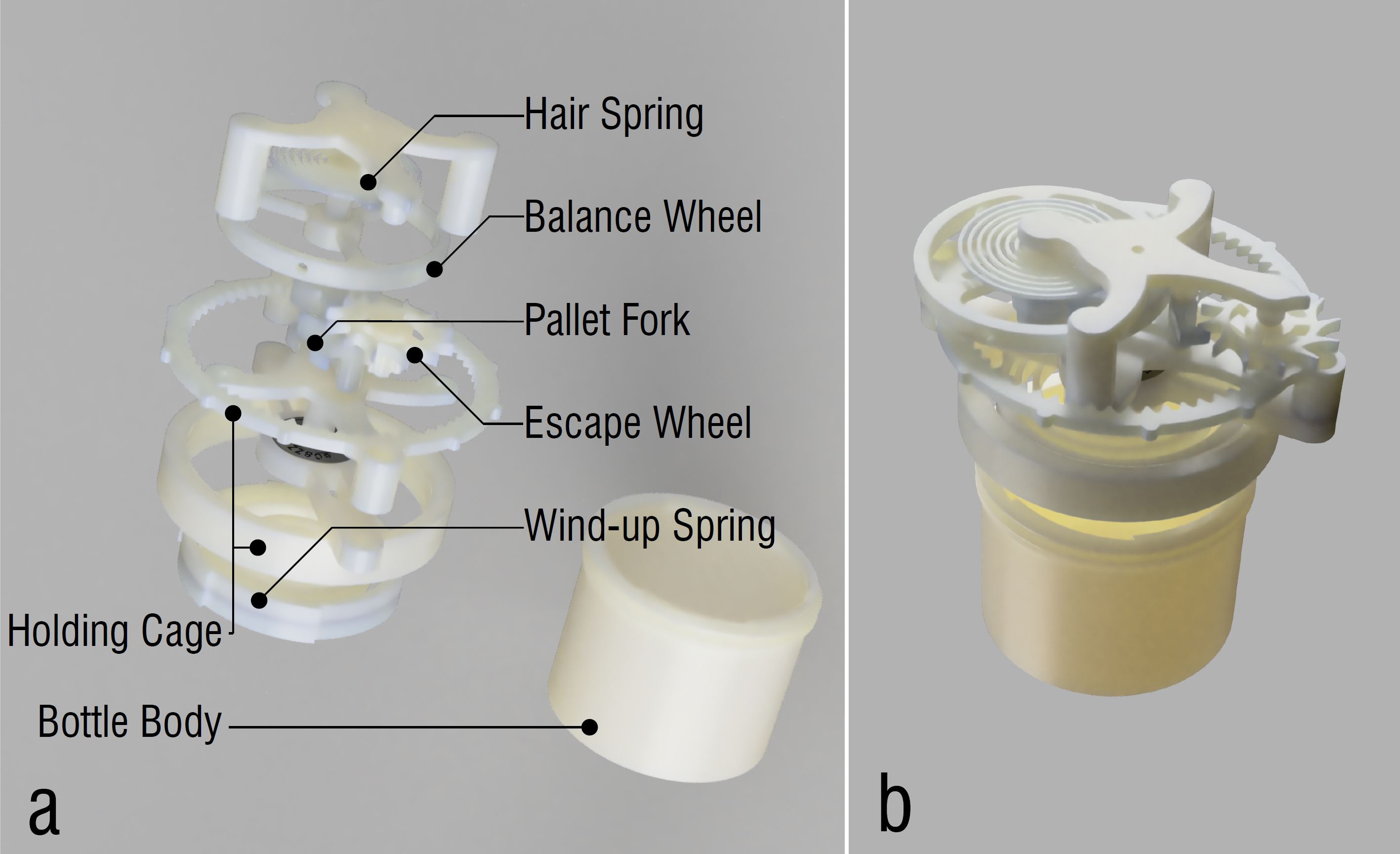

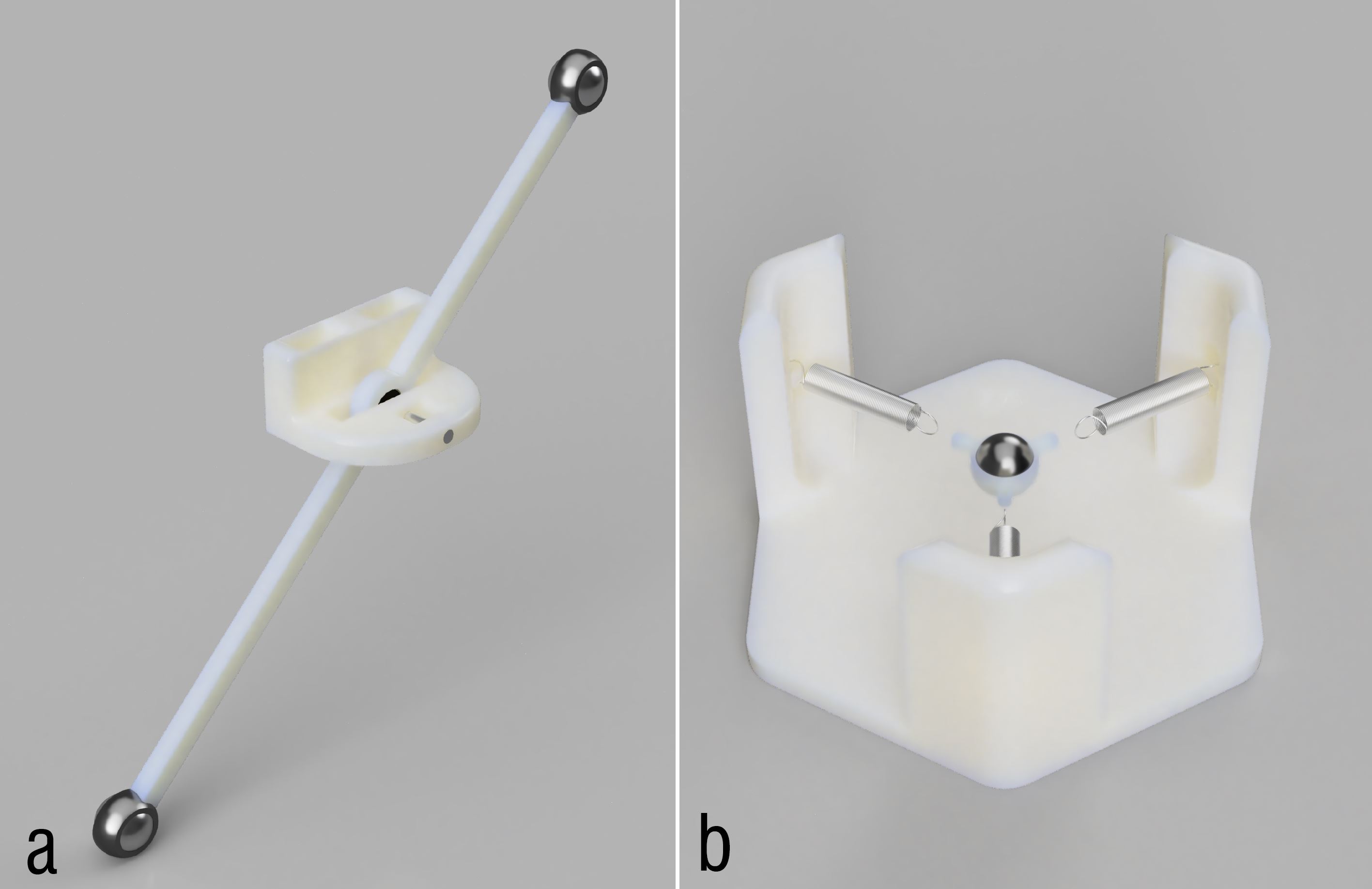

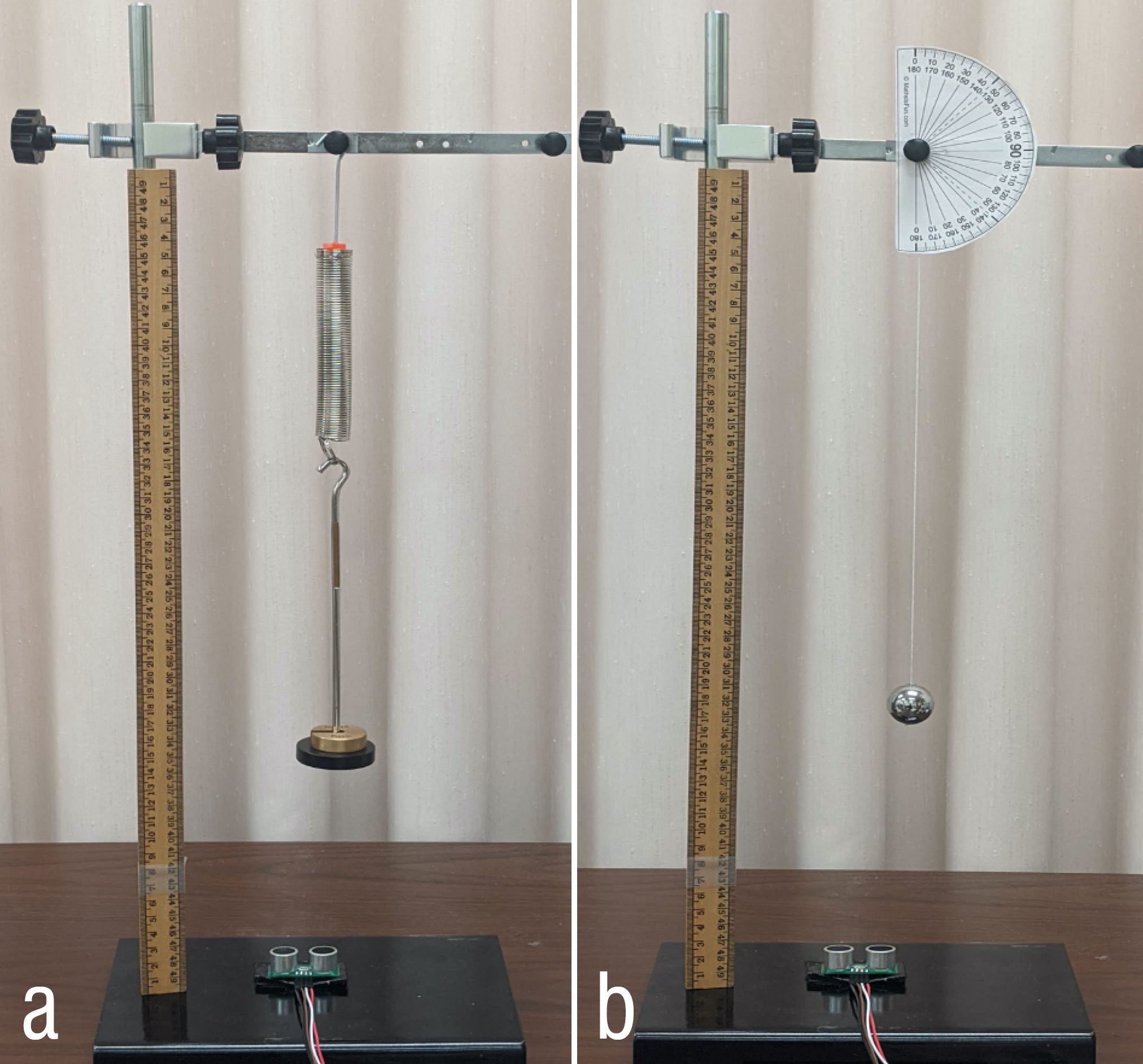

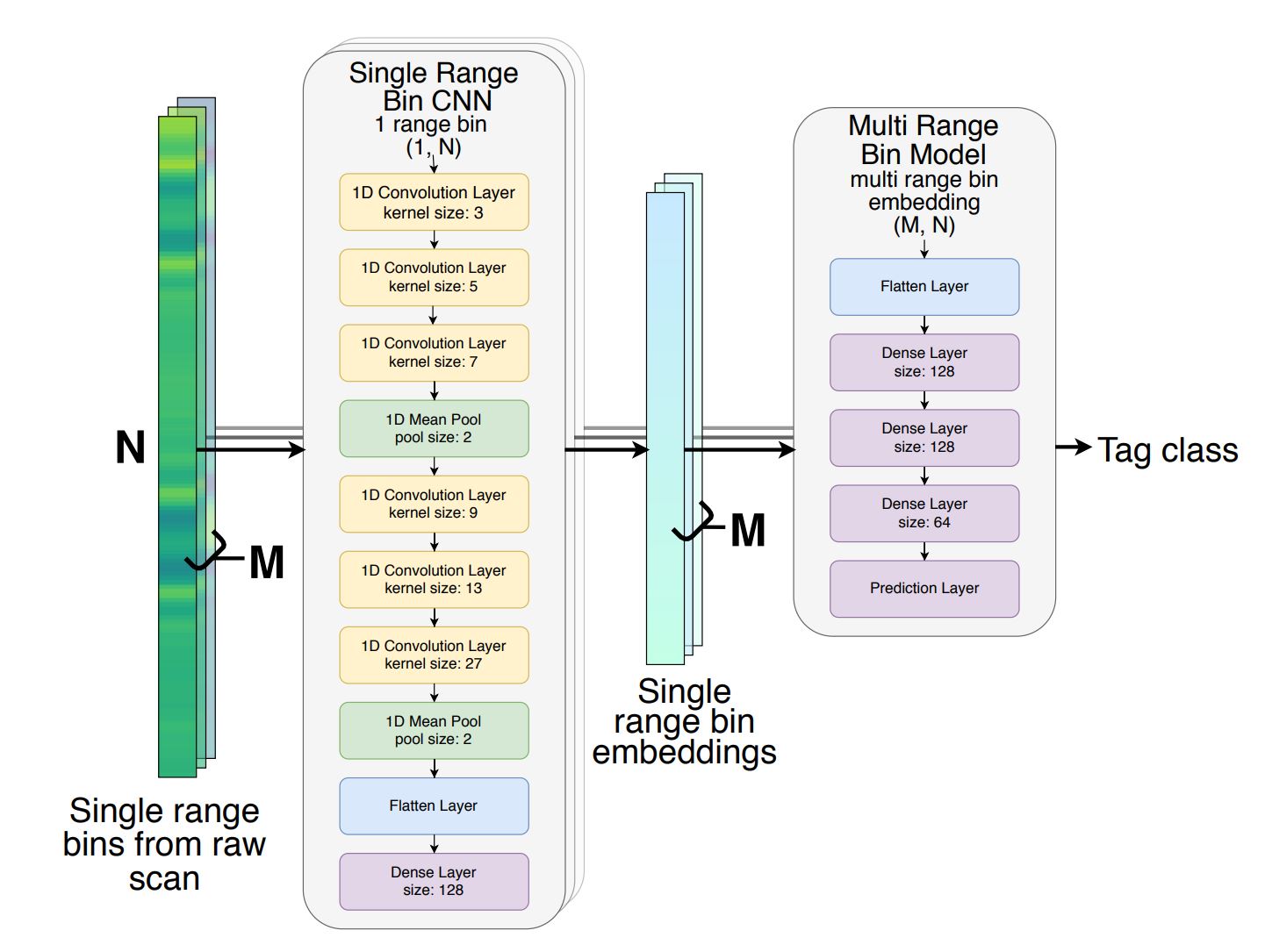

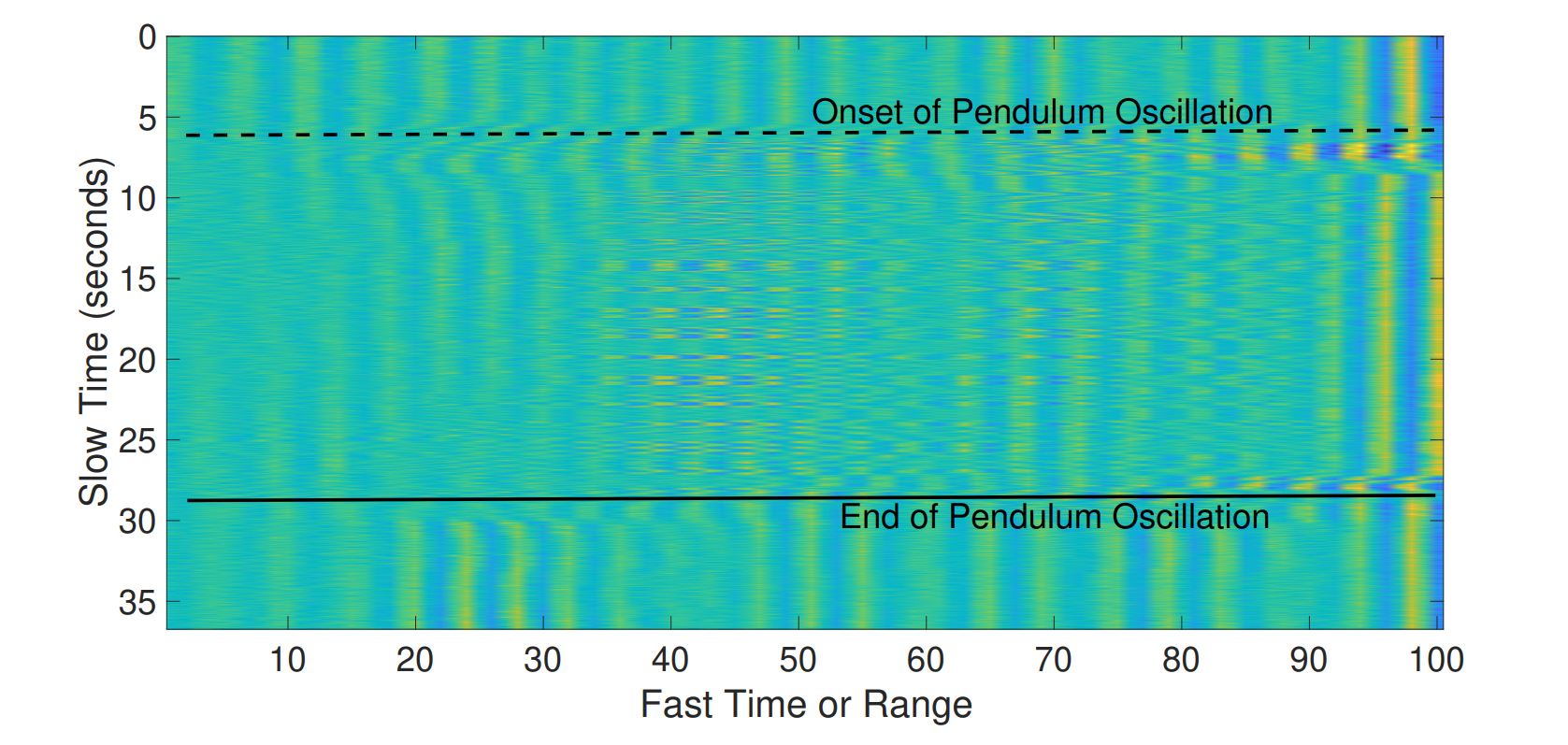

We present MechanoBeat, a complete platform for detecting and localizing object interactions using low-cost, electronics-free, and easy-to-install 3D printed tags that generate unique harmonic oscillations. These oscillations, or "heartbeats", are detected by ultra-wideband radar arrays mounted on a wall. We develop an efficient signal processing and deep learning pipeline that locates and identifies the active tags using only the signals reflected from the vibrating tags. The tags can be attached to both stationary and movable objects, and they can be detected upon user interaction in noisy environments and without a line of sight. Stationary tags detect interactions with fixed objects such as kitchen appliances (freezers, microwaves, cabinets, drawers, etc.), washing machines, and water faucets. Such interactions are important for creating life logs, smarter homes, and smarter workplaces, and they can potentially facilitate ambient assisted living. Mobile tags, on the other hand, can be attached to pill bottles, sugar jars, water bottles, and similar items to track an individual's medication routine, sugar intake, and hydration status, respectively. We empirically show that our pipeline is robust to environmental noise and capable of inferring tag activity even when the radar is obscured.
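To make the idea of a tag "heartbeat" concrete, the following is a minimal illustrative sketch, not the authors' pipeline (which combines signal processing with deep learning). It assumes a single radar range bin sampled over time, a damped sinusoid as the tag's vibration, and a hypothetical table of tag frequencies; all names, frequencies, and the sample rate are assumptions made for illustration only. It picks the strongest spectral peak and matches it to the nearest known tag.

```python
import numpy as np


def dominant_frequency(signal, sample_rate_hz):
    """Return the frequency (Hz) of the strongest non-DC spectral peak."""
    signal = np.asarray(signal, dtype=float)
    signal = signal - signal.mean()              # remove the static (DC) reflection
    windowed = signal * np.hanning(len(signal))  # reduce spectral leakage
    spectrum = np.abs(np.fft.rfft(windowed))
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / sample_rate_hz)
    return freqs[np.argmax(spectrum[1:]) + 1]    # skip the DC bin


def identify_tag(signal, sample_rate_hz, tag_frequencies_hz, tolerance_hz=0.5):
    """Match the dominant frequency to the nearest known tag, or return None."""
    peak = dominant_frequency(signal, sample_rate_hz)
    name, freq = min(tag_frequencies_hz.items(), key=lambda kv: abs(kv[1] - peak))
    return name if abs(freq - peak) <= tolerance_hz else None


# Synthetic example: every value below is hypothetical, not taken from the paper.
rng = np.random.default_rng(0)
sample_rate_hz = 100.0
t = np.arange(0, 4.0, 1.0 / sample_rate_hz)
tags = {"cabinet": 5.0, "pill_bottle": 8.0}

# A decaying 8 Hz oscillation on top of a static reflection plus noise.
signal = (
    2.0
    + np.sin(2 * np.pi * 8.0 * t) * np.exp(-0.5 * t)
    + 0.2 * rng.standard_normal(t.size)
)

detected = identify_tag(signal, sample_rate_hz, tags)
print(detected)
```

Because each tag oscillates at its own frequency, a simple peak match like this can already separate well-spaced tags in a clean signal; handling obstruction, multiple simultaneous tags, and localization is where a learned pipeline such as the one described above would come in.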

Created by:

Md. Farhan Tasnim Oshim, Julian Killingback, Dave Follette, Huaishu Peng, Tauhidur Rahman

Research Paper:

In Proceedings of UIST '20.

For more detail, please visit the project page.